Surfing, Continuous Improvement, and AI

I’ve written about surfing before but had neglected to mention the most excellent Surf Simply in Nosara, Costa Rica. While the New York Times has written up Surf Simply not once, but twice, as have others, it’s really hard to capture what makes the experience so unique.

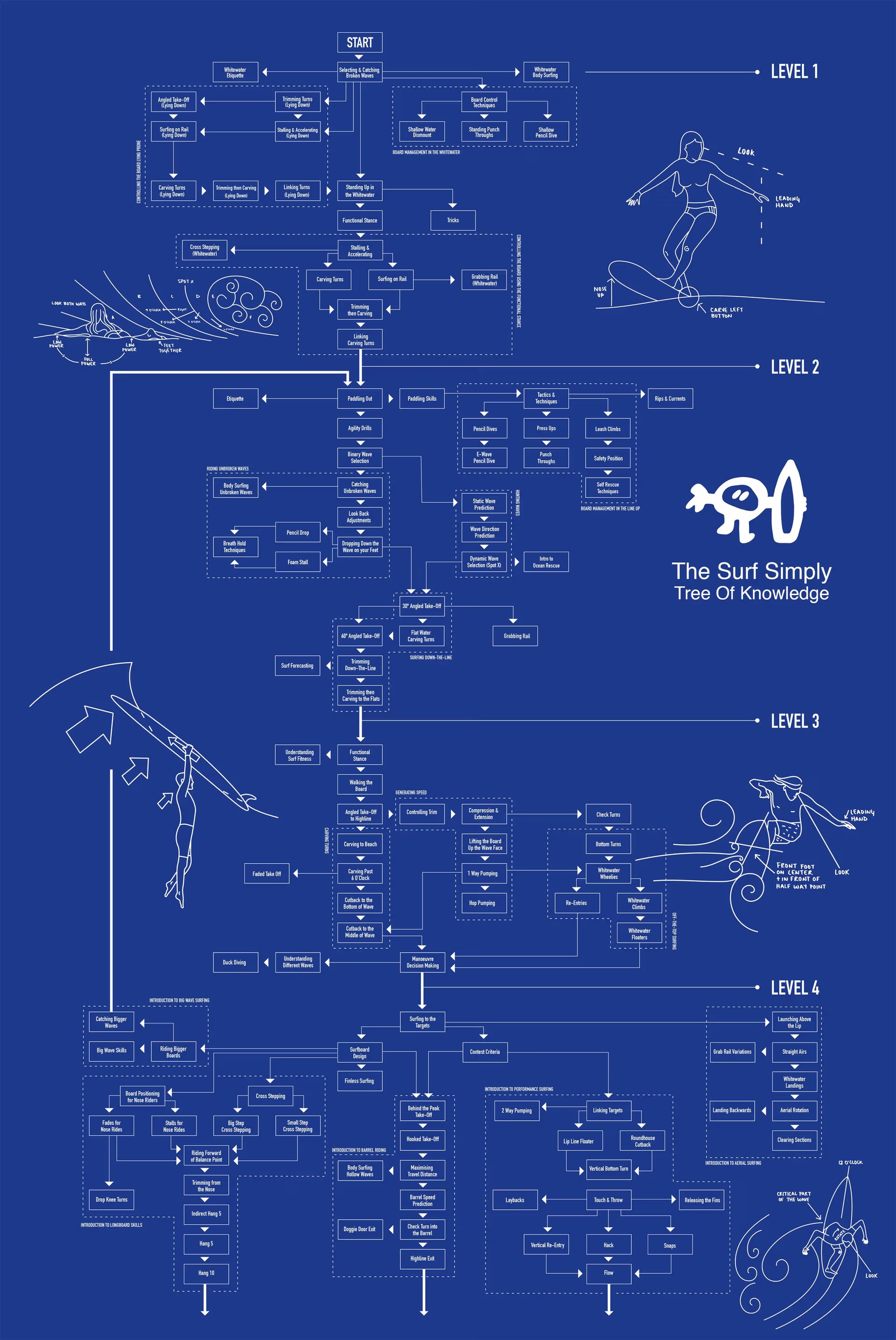

Surf Simply starts with the still-revolutionary idea that surfing is a coachable sport. Their “Tree of Knowledge” breaks surfing into a teachable progression of skills, each with multiple (often dozens of) different ways to learn, practice, and build mastery. I’ve been deeply involved with education and learning theory since the Second Life days, and Surf Simply’s pedagogy is the best I have ever seen at teaching anything.

But that’s not the reason for this post. Instead, a different aspect of Surf Simply feels incredibly relevant to discussions about how to properly integrate Agentic AI into coding and other production flows.

Thinking like a surf camp

Talk to anyone who’s been to Surf Simply and they will gush about the unbelievable level of service, the anticipation, the sense of everything working together to make your week about achieving whatever surf goals you have. It’s full-on Jane McGonigal’s pronoia. It’s almost inconceivable that any group of leaders or coaches, even a group as remarkable as Surf Simply’s, could have just created this.

When you ask them, you get a simple answer: continuous improvement and blameless problem solving.

There’s a Standard Operating Procedures manual that covers everything. Every aspect of the resort. Travel arrangements. Coaching. Maintenance. Everything. It’s pretty massive.

But more than that, it includes all the mistakes. Everything that has gone wrong. And, because the entire team focuses on “fix the problem, then fix what caused the problem so it never happens again”, the SOP is constantly evolving with new and better information.

So Surf Simply is continuously improving. And because they solve mistakes blamelessly, no one covers them up and the team works together to really solve them.

This is how great restaurants operate, too. If you’ve ever spent time in the kitchen of a great restaurant, you’ve seen the staff note every plate that comes back with food on it. Did the kitchen misplate it? Cook it incorrectly? Use subpar ingredients? Make a portion error? It’s not just a commitment to service and anticipation, but a commitment to constantly learning, improving, and preventing the next problem.

The tech side of things

Tech has its own versions of this. Great incident responses and postmortems. Effective critiques. O11y (observability). A constant drive to ensure every part of the product, infra, and team is able to continuously improve.

I was thinking about all of this while reading Mario Zechner’s post “Thoughts on slowing the fuck down”. His priors are pretty clear:

While all of this is anecdotal, it sure feels like software has become a brittle mess, with 98% uptime becoming the norm instead of the exception, including for big services. And user interfaces have the weirdest fucking bugs that you’d think a QA team would catch. I give you that that’s been the case for longer than agents exist. But we seem to be accelerating.

And…

We have basically given up all discipline and agency for a sort of addiction, where your highest goal is to produce the largest amount of code in the shortest amount of time. Consequences be damned.

OK, coolio. What I found interesting was his frustration with using the tools we’d use in the real world to fix these kinds of problems when inexperienced team members caused them.

Now you can try to teach your agent. Tell it to not make that booboo again in your AGENTS.md. Concoct the most complex memory system and have it look up previous errors and best practices. And that can be effective for a specific category of errors. But it also requires you to actually observe the agent making that error.

And…

With an orchestrated army of agents, there is no bottleneck, no human pain. These tiny little harmless booboos suddenly compound at a rate that’s unsustainable. You have removed yourself from the loop, so you don’t even know that all the innocent booboos have formed a monster of a codebase. You only feel the pain when it’s too late.

I already wrote about how much I disagree with the o16g critique that “the backlog keeps our code clean” and this critique strikes me in the same way. “Sure, we were fine being sloppy, so long as we were sloppy and slow.”

I reject that path forward. I want documentation for agents and people to understand what the code should do. O11y so we know what the hell is actually going on. Service Level Objectives so that we can prove whether a change was actually good for our users.
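To make the SLO point concrete, here is a minimal sketch of checking a change against an error budget. Everything in it is an assumption for illustration: the `Window` shape, the 99.9% target, and the request counts are invented, and a real setup would pull these numbers from your observability stack.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Window:
    """Request counts for one measurement window (hypothetical shape)."""
    total: int
    failed: int

    @property
    def availability(self) -> float:
        # Fraction of requests that succeeded; an empty window counts as healthy.
        return 1.0 if self.total == 0 else (self.total - self.failed) / self.total


def budget_remaining(window: Window, slo_target: float) -> float:
    """Fraction of the error budget still unspent; negative means the SLO was missed."""
    allowed_failures = (1.0 - slo_target) * window.total
    if allowed_failures == 0:
        return 1.0 if window.failed == 0 else float("-inf")
    return 1.0 - window.failed / allowed_failures


def change_helped(before: Window, after: Window) -> bool:
    """True if availability did not get worse after the change."""
    return after.availability >= before.availability


# Invented numbers: the same traffic volume before and after a deploy.
before = Window(total=100_000, failed=150)
after = Window(total=100_000, failed=60)

print(f"budget left before: {budget_remaining(before, 0.999):.2f}")
print(f"budget left after:  {budget_remaining(after, 0.999):.2f}")
print("change helped:", change_helped(before, after))
```

The point is less the arithmetic than the habit: whether the author of a change is a person or an agent, "was this good for users?" becomes a question with a number attached.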

But what about the complexity trap? As Mario notes:

Through the grapevine you hear more and more people, from software companies small and large, saying they have agentically coded themselves into a corner.

Guess what, plenty of products humans lovingly built over the years out of artisanal, organic, grass-fed code have fallen into this trap, too. Scaling of audience, team, and data has been the death of plenty of products and companies.

It’s part of why I adore the Tree of Knowledge so much — if we can break surfing down into a directed graph of small, largely independent actions, I’m pretty sure we can break down most products as well.

I’m confident Agentic AI can partner with us to discover those structures very, very effectively.
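As a rough sketch of the directed-graph idea, here is what a tiny skill graph might look like in code. The skill names and their prerequisites are made up for illustration and are not Surf Simply's actual Tree of Knowledge; the same shape could just as easily hold product components and their dependencies.

```python
from graphlib import TopologicalSorter

# Hypothetical skill graph: each skill maps to the skills it depends on.
PREREQS: dict[str, set[str]] = {
    "paddle": set(),
    "pop_up": {"paddle"},
    "duck_dive": {"paddle"},
    "trim": {"pop_up"},
    "bottom_turn": {"trim"},
    "cutback": {"bottom_turn"},
}


def learning_order(prereqs: dict[str, set[str]]) -> list[str]:
    """One valid order in which every skill comes after its prerequisites.

    Raises graphlib.CycleError if the graph contains a cycle.
    """
    return list(TopologicalSorter(prereqs).static_order())


def ready_to_learn(prereqs: dict[str, set[str]], mastered: set[str]) -> set[str]:
    """Skills not yet mastered whose prerequisites are all mastered."""
    return {
        skill
        for skill, deps in prereqs.items()
        if skill not in mastered and deps <= mastered
    }


print(learning_order(PREREQS))
print(sorted(ready_to_learn(PREREQS, {"paddle"})))
```

Once the structure is explicit, "what can we safely work on next?" is a query rather than a judgment call, which is the kind of question an agent can be handed.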

The reality

Once we have the AI table stakes in place and a first working model for how to handle permanent and temporary code, the real work begins.

It’s the reason I wrote the Outcome Engineering Manifesto. Not because Agentic AI can trivially build everything today. Hot take: it can’t. Yet. And it certainly is possible to go all-in on agentic right now — and not just as an excuse for cutting costs — and demolish your company.

But the far greater risk is not building systems now that can continuously improve and blamelessly explore root causes.

Because those companies and teams are going to be running laps around everyone else real soon now.