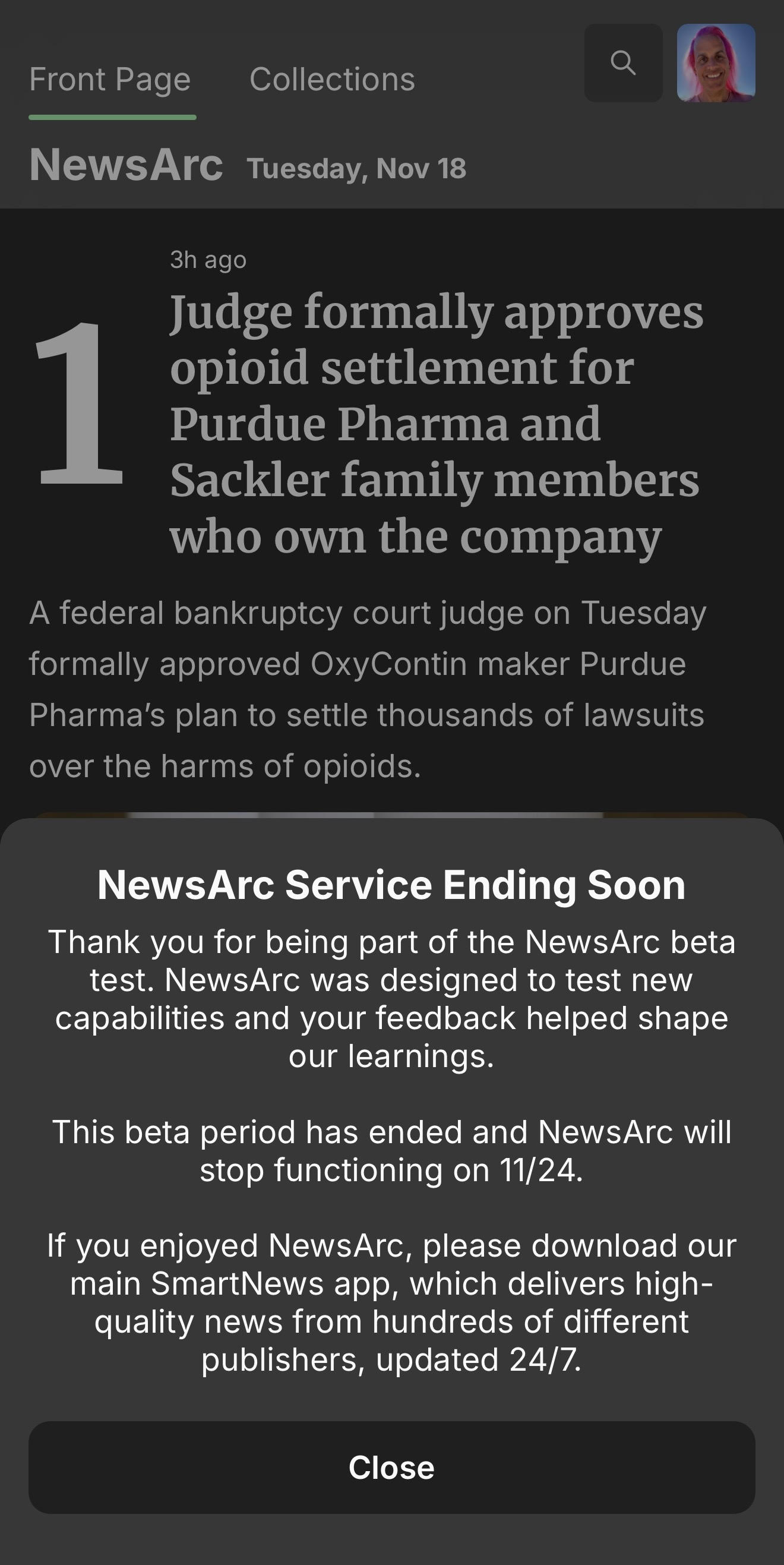

What a year. So many learnings, unexpected twists, and excitement for what’s ahead. It has been hard to write after the shutdown of NewsArc, but as the New Year approaches, it’s time to get back on the horse. Thanks to everyone who’s shared feedback, errata, and thoughts on my posts this year. Despite the writer’s block of the last month, it has been a real joy to be writing again, and I hope to carry that into 2026.

So, what did I learn in 2025?

Write more

I’ve used long-form writing to communicate ideas, priorities, missions, and plans for decades. I’m not a designer or an artist, so if I’m trying to explain something complex, it’ll be through either code or writing. Despite that history, 2025 was a reminder that when I write more, everything is easier.

Take, for example, why I am so opinionated about simplicity and commonality being technical, product, and cultural virtues for startups. While it generally comes from my product development experience at various orgs, it’s also a very concrete outcome of the evolution in how I think about priorities and decision making.

Going back in time, two themes in decision making shaped most of my early adulthood:

- What choice is harder? (I went to high schools stocked with smart and competitive kids, so one way to increase the odds of getting the hell out of Dodge was to pick the hardest paths and challenges.)

- Make the decision before the cost of indecision exceeds the cost of a bad decision (Thank you, Navy, for this lesson over and over again.)

The first one definitely gets you to interesting places, but it isn’t ideal when managing and leading teams. As Philip liked to say, “there are no style points.” The second one gets super messy if there isn’t alignment on costs and risks, and Shrep has a phrase that changed my thinking about it.

So, I’ve updated my thinking somewhat, and now frame it as:

- Is this really a decision we have to make?

- Strong opinions, loosely held (i.e., once a decision is made, commit to it completely until you get new data, and obsessively hunt for that new data)

These principles rely on a few unstated assumptions: a strong bias to action, mistakes whose costs are manageable, and an acknowledgment that costs can’t be estimated without clear, actionable goals. By reducing the cost of developing, trying, and learning, you can more often try both options (point 1), thereby maximizing your rate of learning around the decisions you do make and minimizing the costs when you are wrong (point 2).

When those assumptions go unstated, I guarantee teams won’t understand why I’ve asked them to bias towards commonality and simplicity, why observability is critical, or why we need to measure how resources align with priorities.

Writing more means the connections and reasoning are out in the open. It creates space for rigorous debate, memorializes decisions, reduces relitigation, and leads to better decisions.

I knew all this, but 2025 was a year of learning it again. Once we put it into practice, with other leaders also writing more, the improvements in velocity, innovation, and product quality were obvious, repeatable, and sustainable.

So, duh, write more.

Everyone wants a simple solution to priorities

Unemployment means interviews — no matter how much you are trying to just catch your breath — and across startups, big public companies, and every scale in between, I was asked repeatedly:

How do you prioritize between product and engineering, between Obviously Important Thing and Other Obviously Important Thing?

My thoughts on this directly intersect a related question Charity posted a little while ago:

If you’re a CTO, what do you do to make sure your most senior, trusted engineers are actively involved in making business-critical decisions, all down the line?

Starting with the first question, the answer is to remember that for your business, product, feature, or whatever, there is always a best answer: an optimal set of priorities. How much time and how many resources are you going to spend trying to find it?

The first point, that there is a singular, best, optimal set of priorities and that it is knowable, is critical. Too often teams and companies get comfortable with the idea that finding it is impossible, that it is unknowable, beyond the realm of mortal knowledge. This relaxes an iron constraint on planning and gives everyone an easy out.

Rather than doing the legitimately hard work of searching for the best decision (including which signals or data would change assumptions, and which strict tradeoffs between critical priorities are acceptable), teams who plan without trying to find the best plan end up doing a ton of performative work that generates very little value. The result is plans that lack the rigor and authority to truly break ties, to help managers at all levels make decisions that continuously improve progress against goals.

Why do companies screw this up? Because it requires 1) seriously hard work among busy, smart leaders who often have genuinely conflicting local priorities, 2) leaders to take personal responsibility for hypotheses, learnings, and outcomes in ways that looser plans do not, and 3) CEOs willing to make the final call with incomplete knowledge and not enough time to be sure. These are all real challenges. Even otherwise capable senior teams who haven’t done the work to build partnership, collaboration, and joint decision-making skills can fumble these tasks.

When interviewing or talking to peers, listen for questions that signal a lack of collective understanding, a lack of shared responsibility. If your boat is sinking, pointing out that the leak isn’t your part doesn’t keep you afloat. My favorite conversation starter that signals real problems?

“How do you prioritize between revenue and product quality?”

Think of all the issues this question signals: lack of understanding of LTV and user experience, lack of mature modeling of user roles and the flows between them, the implication that revenue and product are us vs. them and in tension, etc. This is a mu moment: unask the question. Instead, close the gaps that make someone want to ask such a poorly formed, incomplete question.

And this takes us back to Charity’s question. Absent the best plan with strict, unified priorities, how can engineers make good decisions and, more importantly, be a strong point of discovery for new data? When the plan is sloppy and doesn’t truly set priorities, more junior leaders and team members don’t have the clarity to know it is safe to raise concerns, to point out where reality isn’t matching the plan.

In a sloppy plan, there’s wiggle room everywhere: leaders who shade and modify things, who don’t apply rigor to why the next hire or next $10k spend really does need to go elsewhere. In that environment, why would a senior engineer who’s learned there’s an inconvenient problem ever surface it? How can the team and org really know this concern is real vs. general senior engineer Grumblefest (tm)?

Of course, that’s necessary but not sufficient; you also need all the other good habits around disagreement, safety, etc.

Beyond all that I have written about using AI in ranking, I’ve also been using AI in coding contexts a lot. The difference in one year is frankly astounding. At the start of 2025, AI could write a bit of very specialized code, some boilerplate.

Now? You’re probably making a mistake if you aren’t using coding LLMs for:

- Code review. Why aren’t you getting an AI look on every commit?

- Security reviews

- Performance optimization

- Expanding and validating test coverage

- Ports, refactors, and any coding tasks with high-quality test coverage

- Pair programming without having to fight over the keyboard

- Documentation

- Optimizing your code and development process for both real-time and offline coding assistants

None of this is YOLO vibe coding. You still need to understand how the code works, but for a few dollars you can get powerful help on virtually any coding task.

And that’s before counting the places adjacent to coding (o11y is the obvious one) where LLM inference and interfaces are transforming how tools partner with us. Models small enough to embed make any interface smarter and more resilient. Models and systems large enough to understand your whole system should be transforming your understanding of it.

And this is as bad at coding, understanding, and product development as AI will ever be. 2026 is going to be very interesting.

I’m bad at not having a job

I was planning to just spend some time coding, cooking, and generally figuring out what’s next. That plan failed. Instead, super excited to be starting something both very different and very familiar in 2026. More to share soon!

And thank you for reading

After a long stretch of not writing, I wrote 50,000+ words across 70+ posts in 2025. We’ll see how I do in 2026. Write more, indeed.